Intro: I tested ChatGPT with some Harry Potter-related prompts to see whether it has (or had) access to the entire content of the books, as part of my research on the intersection of copyright and generative artificial intelligence. Instead of getting a conclusive answer, I realized how confident ChatGPT sounds even when it is fabricating a whole paragraph! ChatGPT is far from pushy about its answers; on the contrary, it steps back immediately when they are questioned. Even so, the design clearly lacks checks and balances that would encourage the user to question the validity of what ChatGPT provides, and we all know that a static disclaimer at the bottom of the screen will not work. I wonder what the effect of design-related regulatory measures would be, such as requiring generative model outputs to begin with cautionary expressions like “I am not sure, but…” or “I may be wrong, but…”. Anyway, there are many more questions to ask, but I wanted to share a part of my experience.

Advanced generative models have been with us for only a short time. Still, they have already triggered heated debates, one of which is whether feeding existing works into the development of such models constitutes copyright infringement.

This question is relevant to all generative models, but to keep it simple, let’s focus on just one: ChatGPT. It is a fact that a vast amount of data is needed to develop a model as accurate and efficient as the one underlying ChatGPT. Currently, the default model is GPT-3.5; GPT-4 is also available, but with some access limitations.

The problem is that we do not know which datasets GPT models are trained on. Documentation on OpenAI’s website contains only some ambiguous references to these datasets, such as the following:

GPT-3 has been pre-trained on a vast amount of text from the open internet. [Source]

GPT-3.5 series is a series of models that was trained on a blend of text and code from before Q4 2021. [Source]

Our models are trained on datasets that contain some information about real world events up until 8/2020. If you rely on the models representing recent events, then they may not perform well. [Source]

However, these references are not enough to determine whether the models powering ChatGPT were trained on existing copyrighted works. Of course, this issue can only be resolved conclusively through a formal investigation or a direct announcement from OpenAI itself. Should we wait for such an official source, or is there anything we could do in the meantime? For example, how about asking ChatGPT itself questions to understand whether it was trained on copyrighted content?

As a well-known Potterhead among friends, I fed ChatGPT (using GPT-4 as the model) prompts related to the Harry Potter franchise to provide you with some examples. This is just one of the examples I examined for my legal paper on the intersection of generative artificial intelligence and copyright. Still, I wanted to share it here and get feedback, if there is any. Please let me know if you have any comments or questions on the issue.

Let’s start with a simple one: Who is Harry Potter?

This is an accurate answer, but it does not indicate any infringement, as these are just the names of the books, after all. Let’s ask a bit more about the plot: Can you tell me what the plot is in each Harry Potter book with a few sentences? (Spoiler alert!)

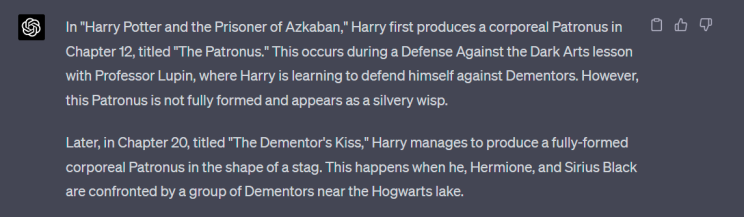

Once again, this is correct but not sufficient to reach any conclusion. Now, I will stop wandering around and make my prompts more specific: In which chapters of Harry Potter and the Prisoner of Azkaban Harry produces a corporeal Patronus?

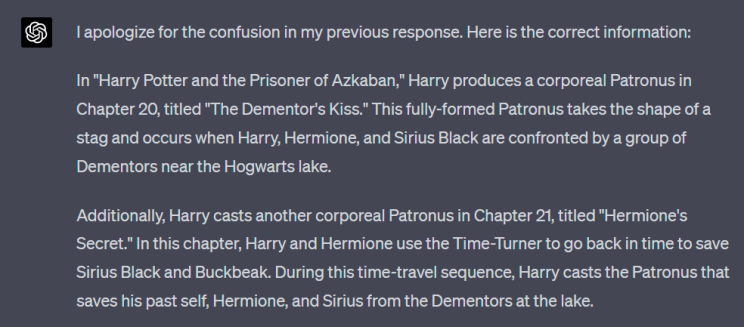

ChatGPT knows the first time Harry produced a Patronus, albeit not a corporeal one. I want to ask my question again, as I know from experience that ChatGPT is inclined to start making stuff up when asked the same question twice: In which chapters of Harry Potter and the Prisoner of Azkaban Harry produces a corporeal Patronus?

The information provided is getting more specific, and ChatGPT stands firm. Not only does it know when Harry produced a wisp of a Patronus for the very first time, but it also knows the details of the corporeal Patronus scenes well enough to tell them apart when asked. Now let’s proceed with the real copyright question: Can you quote the respective paragraphs?

ChatGPT quotes some paragraphs from the third book of the series, Harry Potter and the Prisoner of Azkaban. Are they accurate, though? Let’s ask about their pages as well: Can you tell me which pages these paragraphs are on?

I followed ChatGPT’s advice and consulted my copy. Some of the sentences in these paragraphs are indeed from the book, but not all of them are in the chapter ChatGPT said they were in. More importantly, some others are from different chapters and are stitched together with expressions that are not actually in the books. Here we see some traces of ChatGPT’s fabrication problem, but, honestly, after having seen that ChatGPT may present completely made-up stuff as a summary of a well-known Turkish classic, I did not think this was a big deal, at least not at first.
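For anyone who would rather not do this comparison by hand, the check can be roughly automated. What follows is only a minimal sketch, not the method I used: it assumes you have lawful access to the text as plain strings keyed by chapter, and the function names (`normalize`, `split_sentences`, `check_quote`) and the placeholder sentences are all made up for illustration. It splits a supposed quote into sentences and reports which chapters, if any, contain each sentence verbatim.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, unify curly quotes, and collapse whitespace."""
    text = text.replace("’", "'").replace("‘", "'")
    text = text.replace("“", '"').replace("”", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def check_quote(quote: str, chapters: dict[str, str]) -> list[tuple[str, list[str]]]:
    """For each sentence in `quote`, list the chapters that contain it verbatim."""
    normalized = {name: normalize(body) for name, body in chapters.items()}
    report = []
    for sentence in split_sentences(quote):
        needle = normalize(sentence)
        hits = [name for name, body in normalized.items() if needle in body]
        report.append((sentence, hits))
    return report


# Placeholder text only; these are NOT sentences from any real book.
chapters = {
    "Chapter 1": "The rain fell all night. Nobody slept.",
    "Chapter 2": "By morning the river had risen. The bridge was gone.",
}
quote = "The rain fell all night. Everyone cheered loudly. The bridge was gone."

for sentence, hits in check_quote(quote, chapters):
    label = ", ".join(hits) if hits else "not found"
    print(f"{sentence!r}: {label}")
```

An exact-match check like this would only flag sentences that differ from the source; it would not explain small alterations, so a fuzzy comparison would probably be needed to catch near-verbatim sentences.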

I wanted to ask a final question before getting back to my research, without knowing that this would set the ground for even more questions: In which book and in which chapter is there a reference to Turkey or something Turkish in the Harry Potter series?

The near-total absence of anything Turkey-related in the series, with one exception, has been a source of complaints from the series’ Turkish fan base. The one existing reference is in the fourth book, Harry Potter and the Goblet of Fire. One of the witches at Ludo Bagman’s trial, which Harry watches in the Pensieve, congratulates Bagman on his performance in a Quidditch match between England and Turkey. As this is a relatively insignificant detail with no effect on the plot, knowing it may suggest access to the entire content of the book, albeit inconclusively. Here is the answer:

This raised a lot of questions and flags in my mind. First, as a general flag, ChatGPT provided a quote supposedly referencing Turkey without showing the actual reference. This is a serious shortcoming for any text that claims to retrieve information. Putting that aside, second, this is not the quotation mentioning Turkey. Third, neither the quote nor the information here is substantially accurate. The first sentence of the quote is from the fourth book, Chapter 8, not Chapter 7. The next sentence is not from any of the books. Google tells me that it is from a Harry Potter-based Wiki page. So, ChatGPT can produce texts as if they are from the Harry Potter books, but it does not know how much of the text it produced is actually in the books.

I got intrigued and wanted to keep going for a little longer. I wanted to know if ChatGPT actually has the part I was looking for in its knowledge base. Another repeated question: Can you quote the paragraph from the Goblet of Fire mentioning Turkey?

This reply complicated things further instead of resolving them.

The quote starts with a sentence from Chapter 11. However, the character saying this is not one of our trio but Draco Malfoy. The following paragraph begins with “Hermione got up,” but the rest of that paragraph is nothing more than a combination of unrelated expressions scattered across different parts of the book and, more interestingly, a series of fabrications. Ernie Macmillan, the character mentioned here, is in the same year as Harry. I also checked the fifth book (Harry Potter and the Order of the Phoenix), where both Harry and Ernie are in their fifth year, to see whether this is a cross-book reference, but these sentences are not in any of the books either.

The following sentence, on the other hand, definitely led me to the second book (Harry Potter and the Chamber of Secrets), as any Potterhead would recall Gilderoy Lockhart’s boasting and the Duelling Club. As I expected, I found the first sentence in the second book, but the remaining part was a distorted version of some of the facts in that book, since Finch-Fletchley and Longbottom were not the volunteering pair but a pair put together by Lockhart during the general practice. The last sentence is, once again, entirely made up!

Returning to my main quest, I asked ChatGPT again: I still cannot see the Turkish reference. Are you sure this is the correct one?

As I already told you, this is not correct. However, at this point, I should also mention that this is very common behavior for ChatGPT. Despite its seemingly great wisdom, we should be aware that ChatGPT is not human and, thus, does not think or act like one. Put simply, everything ChatGPT produces is part of its truth. In other words, it behaves as if it is always right until it is questioned. When questioned, it swiftly takes a step back and adopts the new suspicion as the basis for its new “truth.” This clearly shows that we should listen to the warnings of many, including OpenAI itself, and be careful about accepting what ChatGPT gives us at face value. ChatGPT is a tool with its own version of the truth and not an encyclopedia of objective facts.

Getting back to the question, I wanted to provide ChatGPT with some guidance in finding the reference to Turkey: I think one of the witches in Ludo Bagman’s trial, which Harry witnessed in the Pensieve in Chapter 30 congratulates Bagman for his performance in a quidditch match against Turkey. Isn’t that correct?

Here, after a fabricated opening sentence, ChatGPT quotes a couple of sentences from the relevant chapter. These are verbatim except for one very minor modification, the reason for which is a mystery. In the book, instead of “he squeaked,” we see “said Mr. Crouch.” Mr. Crouch is a serious character whose lines Rowling does not report with the verb “squeak.” The remaining part of this paragraph is an instance where ChatGPT completely lost its grip on the plot and fabricated entire conversations!

I decided to point ChatGPT directly to the source: It should also contain a sentence like “We’d just like to congratulate Mr Bagman on his splendid performance for England in the Quidditch match against Turkey.” Am I wrong?

ChatGPT completes the sentence correctly, but immediately after that it starts fabricating a mention of the Wronski Feint, a famous Quidditch maneuver that is referred to in the fourth book, but in a different chapter.

What do these show us?

To be honest, nothing conclusive. ChatGPT may occasionally lose the context and its grip on the plot. But when it does not, it seems able to quote sentences verbatim. Of course, there is always the possibility that the only parts “reproduced” by ChatGPT are the ones publicly accessible on the Internet. Still, this possibility alone neither eliminates the likelihood that ChatGPT was trained on copyrighted content nor makes it lawful to copy or use the respective content freely.

However, two things are certain: 1) The fact that ChatGPT can quote verbatim expressions from different works, including the Harry Potter books, increases the likelihood that ChatGPT was trained on a vast amount of copyrighted content and, thus, raises copyright infringement questions. 2) ChatGPT, by design, sounds very confident, even when it is making stuff up! Both of these issues merit their own separate and detailed research, and knowing this, I’ll leave it here. Please let me know if you have any questions or comments.