AI has become an essential part of modern enterprise operations. Legal teams now rely on it to handle complex and high‑volume tasks such as document review, contract analysis, investigations, and regulatory response.

These capabilities improve consistency, reduce manual workload, and support faster decision-making.

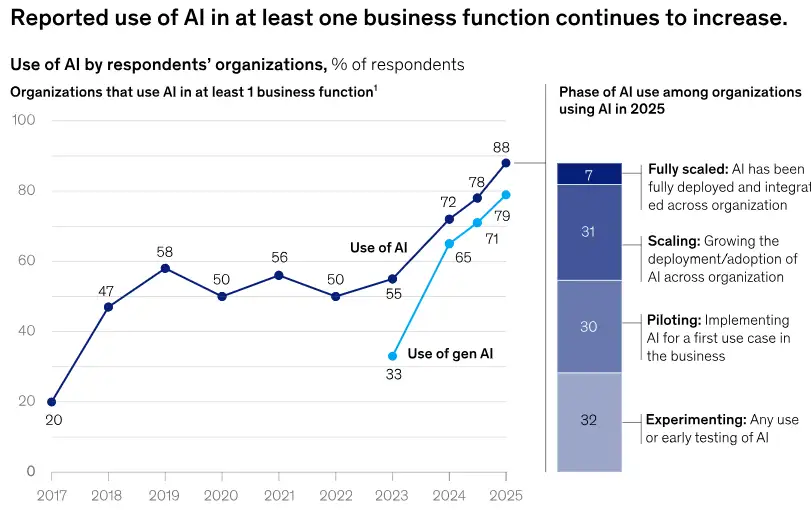

What makes the current landscape different is the speed at which organizations are adopting AI. According to McKinsey’s State of AI 2025 survey, 88 percent of organizations now use AI in at least one business function, and the use of generative AI continues to grow alongside it.

This widespread adoption shows that AI is moving beyond isolated use cases and becoming part of everyday business processes across the enterprise. At the same time, many organizations are still working to standardize how AI is integrated into their operations.

Source: McKinsey

As AI becomes embedded in legal operations, it influences how information is interpreted, organized, and presented across a matter’s lifecycle.

Legal work requires precision, clarity, and defensibility. Because of this, the question is not whether to use AI, but how to use it in ways that inspire trust. Strong governance and transparent practices are now central to whether legal teams feel confident relying on AI-driven assistance.

The Growing Importance of Ethical AI in Legal Operations

Legal professionals operate under strict duties, regulatory expectations, and confidentiality obligations. Every decision, interpretation, or output may face review during litigation, audits, or regulatory inquiries.

When AI supports these legal workflows, it influences how information is prioritized, categorized, and understood, even though final decisions remain with legal professionals.

AI can guide early case assessments, shape viewpoints on evidence, or influence the direction of legal strategy. If accuracy, clarity, or fairness is compromised, the impact goes beyond workflow efficiency. It can affect defensibility, risk exposure, and reliability.

Four core expectations continue to define responsible AI adoption in legal environments:

Accuracy and reliability

Legal work depends on consistency and precision. AI-assisted workflows should deliver dependable results that support these standards. If outputs deviate from expected quality, they can disrupt the legal process.

Fairness and bias awareness

Models learn from historical data. When that data contains gaps or skewed patterns, the model may reinforce them. Regular validation and monitoring help keep assessments balanced.

Transparency and explainability

Legal teams must understand how AI reaches its conclusions. Without this clarity, it is harder to justify or defend outputs when questions arise during reviews or proceedings.

Human accountability

AI elevates professional judgment while staying in a supporting role. Final decisions rest with legal practitioners, who maintain the human oversight needed at every step of the workflow.

Together, these expectations support responsible use and help AI function as a trusted partner rather than a source of uncertainty.

Expanding Compliance Expectations

The regulatory landscape around AI is changing quickly. Sectors that manage sensitive or regulated data, including legal services, face heightened expectations.

Organizations must show that their data practices are secure, consistent, and governed. They must also demonstrate that AI-supported processes are transparent, traceable, and compliant.

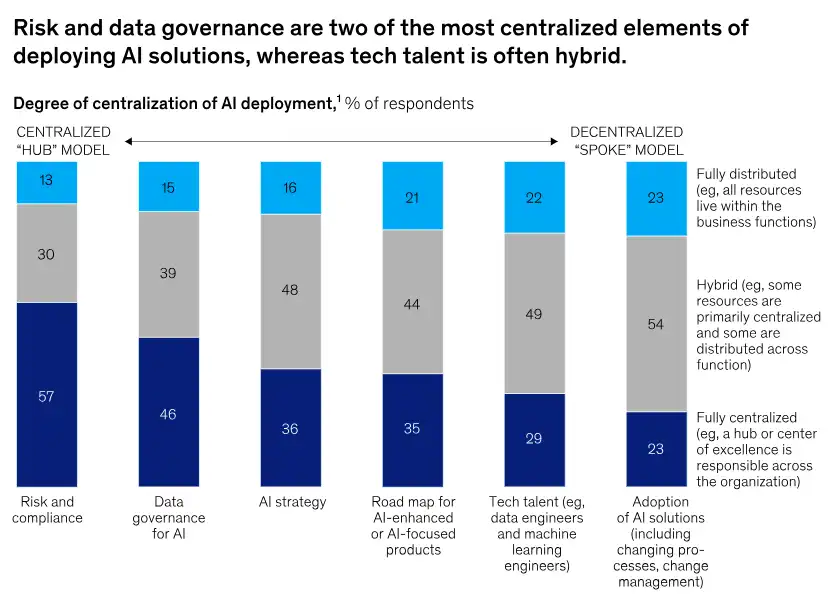

Enterprise trends reflect this shift. Many organizations are centralizing risk, compliance, and data governance functions to prepare for new laws and standards related to AI oversight.

Source: McKinsey

McKinsey’s 2025 research shows that governance functions remain a clear priority for organizations deploying AI, with 57 percent reporting fully centralized risk and compliance and 46 percent reporting centralized data governance for AI.

This emphasis on centralization reflects growing concerns around accuracy, compliance, and oversight, underscoring the need for stronger governance.

Several themes now define AI governance expectations in legal settings:

Data privacy and protection

Legal data contains personal, sensitive, and privileged content. Strong safeguards are essential to prevent exposure or unauthorized access.

Data residency and cross-border control

AI processing must follow jurisdictional restrictions on where data resides and how it can be transferred. These rules are critical in global matters.
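
As a rough illustration of how a residency rule might be enforced before data reaches an AI service, the sketch below checks a processing region against an allow-list keyed by the data's jurisdiction. The jurisdiction codes, region names, and the `is_processing_allowed` helper are all hypothetical; real restrictions depend on the matter and the applicable law.

```python
# Hypothetical allow-list: jurisdiction of the data -> regions where it may be processed.
ALLOWED_REGIONS = {
    "EU": {"eu-west", "eu-central"},
    "US": {"us-east", "us-west"},
}


def is_processing_allowed(data_jurisdiction: str, processing_region: str) -> bool:
    """Return True only if the region is explicitly allowed for the jurisdiction.

    Unknown jurisdictions are denied by default, so a missing policy entry
    blocks processing instead of silently permitting it.
    """
    allowed = ALLOWED_REGIONS.get(data_jurisdiction, set())
    return processing_region in allowed
```

Denying by default is a deliberate choice in this sketch: a gap in the policy table stops the transfer and prompts a review.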

Auditability and recordkeeping

Teams should be able to track how data was used, how outputs were generated, and which steps influenced final conclusions. Clear records strengthen defensibility.
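
One way to picture such a record is a small, append-only log entry written each time AI contributes to a step. The sketch below is a minimal example under stated assumptions: the `AuditRecord` fields, the `make_record` and `to_json_line` helpers, and the sample values are hypothetical, not a description of any particular platform.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    """One AI-assisted step in a matter (all field names are hypothetical)."""
    matter_id: str
    step: str
    model_version: str
    input_sha256: str      # fingerprint of the input, not the input itself
    output_summary: str
    reviewed_by: str       # the person accountable for the final decision
    timestamp: str


def make_record(matter_id: str, step: str, model_version: str, input_text: str,
                output_summary: str, reviewed_by: str,
                timestamp: Optional[str] = None) -> AuditRecord:
    """Build a record, storing a SHA-256 hash in place of the raw input text."""
    digest = hashlib.sha256(input_text.encode("utf-8")).hexdigest()
    ts = timestamp or datetime.now(timezone.utc).isoformat()
    return AuditRecord(matter_id, step, model_version, digest,
                       output_summary, reviewed_by, ts)


def to_json_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    matter_id="M-001",
    step="privilege_review",
    model_version="model-v1",
    input_text="sample text",
    output_summary="flagged as potentially privileged",
    reviewed_by="reviewer@example.com",
    timestamp="2025-01-01T00:00:00+00:00",
)
line = to_json_line(record)
```

Hashing the input keeps privileged text out of the log while still letting a reviewer confirm later which document a given output was based on.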

Third-party risk management

Vendors that provide AI capabilities must meet enterprise‑grade security, governance, and transparency standards. Legal teams need visibility into how data flows through these platforms.

Clients, regulators, and partners now expect greater clarity. Trust in legal services is closely tied to how openly organizations communicate their AI governance approach.

Understanding the Key Risk Areas in Legal AI

As AI becomes more integrated into legal processes, several risks demand attention. Awareness of these areas supports responsible adoption without compromising legal integrity.

Data quality and context limitations

AI relies on the quality and relevance of training and input data. Outdated, incomplete, or biased datasets can distort outcomes. In legal work, even small inaccuracies can shift priorities or cause misinterpretations.

Limited explainability

Some models use complex, layered methods that make it hard to see how a specific result was produced. In legal environments, where defensibility matters, limited explainability can present challenges.

Confidentiality and security concerns

Legal data must be handled with extreme care. When AI processes information in environments without strong controls, the risk of unintentional disclosure increases.

Overdependence on automation

AI offers efficiency, but legal reasoning requires nuance. Relying too heavily on automated outputs may cause teams to miss subtle details or context-specific factors.

Vendor transparency

Legal teams need to understand how external AI platforms are trained, secured, and maintained. Limited visibility raises compliance risk and makes reliability harder to assess.

Recognizing these risks strengthens internal oversight and supports more confident adoption.

Transparency as the Basis for Trust

Trust is central to any legal workflow that involves AI. Transparency builds that trust by making AI’s role clear and understandable.

Effective transparency includes:

- Communicating where and how AI contributes within a workflow

- Maintaining documentation that shows governance and oversight

- Demonstrating how AI influenced a decision when questions arise

- Ensuring that confidential data remains in protected environments

Organizations that invest in transparency tend to see stronger adoption within their teams. They also gain greater confidence from clients and regulatory bodies that expect accountability.

Ethical AI as a Foundation for Sustainable Innovation

Legal teams face higher data volumes and increasingly complex compliance requirements. AI offers meaningful support, but long-term value depends on responsible use.

Ethical governance helps organizations:

- Reduce legal and operational risk

- Strengthen the defensibility of outcomes

- Build trust with internal and external stakeholders

- Expand advanced capabilities in a controlled manner

- Keep innovation aligned with professional and regulatory expectations

Responsible use does not slow progress. It creates a stable foundation that enables sustainable innovation across legal operations.

The Future of AI in Legal Tech

AI capabilities will continue to advance across legal operations, and expectations for accountability, explainability, and oversight will rise alongside them. Legal teams will evaluate AI not only on performance but also on how well it aligns with risk, compliance, and data protection standards.

Recent findings from the American Bar Association’s 2025 Legal Industry Report show that individual use of generative AI has increased to 31 percent, while firm‑wide adoption remains more cautious due to policy and ethical concerns. This trend reflects a future where ethical alignment shapes adoption decisions.

Legal organizations are moving toward evaluating AI based on:

- Explainability and control

- Data security and privacy safeguards

- Integration with enterprise risk management

- Ongoing monitoring and validation

As technology choices become more closely tied to trust and defensibility, organizations that embed ethical principles into their AI strategy today will be better equipped for evolving regulations and rising stakeholder expectations.

Conclusion: Trust Will Define the Value of Legal AI

AI has the power to reshape legal operations, but its long-term value depends on more than automation. Trust, supported by ethics and strong governance, will remain the defining factor.

As legal teams move deeper into digital transformation, responsible use of AI will become the foundation of credible, secure, and future-ready legal operations.

The post Navigating AI Ethics and Compliance in Legal Tech: Building Trust in an AI Driven Legal Environment appeared first on Knovos.