I just returned from the IAPP Global Privacy Summit, and there was a lot to absorb. Sessions covered AI governance, children’s safety, advertising compliance, international data law, and more.

There is SO much that we learned at IAPP that we can’t possibly put it all into this one newsletter, so stay tuned for part 2 next week, where I’ll share more about what I heard at the IAB Public Policy Summit and the IAPP US State Workshop.

Here’s what stood out to the Red Clover team. In addition to learning, we networked with privacy pros, drank coffee and matcha from our partners on the vendor floor, collected stuffed animals (pandas, ostriches, and octopuses), and had plenty of fun!

For those who like all my graphics, I’ll warn you that this week is really content heavy. Grab your cup of coffee/tea/favorite drink and happy reading.

The Opening: On Change and Who We’ll Be

Maya Shankar opened with a simple but powerful idea: we recognize how much we’ve changed in the past, but we rarely extend that same thinking forward. We assume the person facing tomorrow’s challenges is the same person we are today. She made the case that the stress of feeling unprepared can itself become the obstacle to building the tools you need. A more gracious view of your future self might be exactly what lets you take the steps available right now.

Salman Rushdie offered a different lens on privacy: even good things require exposure. Healthcare, security, and public life all come at a cost to privacy. What we share publicly tends to be a sanitized, idealized version of ourselves, which creates a kind of competition that leaves people feeling more isolated even as they share more.

Google’s Kent Walker demonstrated the company’s Personal Intelligence features: AI with deep access to personal information that surfaces proactive suggestions. The room was left with real questions: What does informed consent look like at this level of access? And when a feature uses one person’s data to surface information about someone else, such as a friend or a contact, what are the consent implications for that second person?

What Boards Actually Want to Hear

Boards want strategy and risk. Not operations. The questions they want answered: What’s happening, why does it matter, what are you doing about it, and what do you need from me?

The moment a presentation slides into task lists and compliance checkboxes, you’ve lost the room. What boards do want: the regulatory landscape, what peers are dealing with, and where business strategy and privacy risk intersect.

In 2026, boards are specifically asking about AI – whether the company is adopting it responsibly, keeping pace with peers, and whether the data being fed into AI systems is actually fit for that purpose.

Practical advice from the panel:

- Put detail in the appendix. Slides need to tell a story, not display walls of small text.

- Frame uncertainty as a range of outcomes, not open-ended unknowns. Show controls in place and where you need board input.

- Don’t wait for the quarterly cycle if something significant happens. Enforcement actions, peer breaches, and major regulatory shifts warrant an interim conversation.

- If you use AI to prepare board materials, you own the output. Review everything. You are responsible for what you present.

Online Advertising: A Complicated Compliance Maze

The all-party consent wiretapping map is bigger than people think. Most businesses think of California and Florida when it comes to states requiring all-party consent for recorded calls. The full list: California, Florida, Washington, Montana, Michigan, Illinois, Connecticut, Pennsylvania, Maryland, Massachusetts, Delaware, Nevada, and New Hampshire. If you record calls, check your coverage.
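If you record calls, a first-pass coverage check can start as a simple lookup against the states listed above. Here is a minimal Python sketch; the function names are hypothetical, and it assumes the conservative practice of getting everyone’s consent whenever any participant is located in one of these states. Think of it as a triage aid for routing calls to the right consent script.

```python
# States named in the session as requiring all-party consent to record calls.
ALL_PARTY_CONSENT_STATES = frozenset({
    "CA", "FL", "WA", "MT", "MI", "IL", "CT",
    "PA", "MD", "MA", "DE", "NV", "NH",
})


def needs_all_party_consent(participant_states: list[str]) -> bool:
    """Return True if any participant is in an all-party consent state.

    Hypothetical, conservative policy: when participants are in different
    states, apply the strictest rule and get consent from everyone.
    """
    return any(state.upper() in ALL_PARTY_CONSENT_STATES for state in participant_states)


def states_triggering_rule(participant_states: list[str]) -> list[str]:
    """List the participant states that trigger the all-party rule (sorted, deduplicated)."""
    return sorted({s.upper() for s in participant_states} & ALL_PARTY_CONSENT_STATES)


if __name__ == "__main__":
    print(needs_all_party_consent(["NY", "ca"]))  # True: California is on the list
    print(states_triggering_rule(["TX", "fl", "FL", "NY"]))  # ['FL']
```

Normalizing to uppercase means inconsistent CRM data ("ca" vs. "CA") doesn’t silently slip past the check.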

Cookie consent requirements are expanding in the U.S. Connecticut, Delaware, Maryland, Montana, New Hampshire, and Florida, along with California, require careful attention to first-party cross-context behavioral advertising cookies. At the IAB Public Policy session, cookies were a very hot topic, and I’ll share more next week.

EU enforcement has shifted from banner design to technical behavior. France fined Google €325 million and Shein €150 million for cookies firing before consent and invalid consent mechanics. The Netherlands issued 50 warnings for misleading banners and dark patterns. German courts now require “reject all” to be as prominent and easy as “accept all.” Belgium ruled that the IAB consent string itself constitutes personal data, and designated IAB Europe as a joint controller. The message: get the technical implementation right, not just the visual design.

California is focused on the gap between expressed consent and what actually happens in the technology. GoFan was fined $1.1 million for forced consent, selling student data, and failing to provide a meaningful opt-out. Regulators are checking whether the tech actually executes what the policy says.
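Because regulators in both the EU and California are testing whether consent is enforced in the technology itself, one practical check is to compare the cookies a page sets before any consent choice against what your policy allows. The Python sketch below assumes you already capture cookie names from a fresh browser session (for example, with a headless browser) and maintain a hypothetical mapping of cookie names to categories; the names in that mapping are examples only.

```python
from dataclasses import dataclass

# Hypothetical classification maintained by the privacy team (example names).
COOKIE_CATEGORIES = {
    "session_id": "essential",
    "csrf_token": "essential",
    "_ga": "analytics",
    "_fbp": "advertising",
}


@dataclass(frozen=True)
class Finding:
    cookie: str
    category: str


def pre_consent_violations(observed_before_consent: list[str],
                           categories: dict[str, str] = COOKIE_CATEGORIES) -> list[Finding]:
    """Flag cookies that were set before the user made any consent choice.

    Only cookies classified as "essential" may fire before consent.
    Unknown cookies are flagged too: an unclassified cookie cannot be
    shown to be essential.
    """
    findings = []
    for name in observed_before_consent:
        category = categories.get(name, "unclassified")
        if category != "essential":
            findings.append(Finding(name, category))
    return findings


if __name__ == "__main__":
    for f in pre_consent_violations(["session_id", "_ga", "mystery_pixel"]):
        print(f"{f.cookie}: {f.category} cookie set before consent")
```

Running the same check after a “reject all” click is a natural extension, since regulators expect rejection to stop non-essential tags just as reliably.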

GPC is about to get much bigger. By 2027, California will require web browsers to include a built-in setting that lets users send an opt-out preference signal such as the Global Privacy Control. Infrastructure that isn’t ready for that volume of opt-out signals needs attention now.
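On the server side, the GPC specification defines the signal as an HTTP request header, `Sec-GPC: 1`. Here is a minimal Python sketch of treating that header as an opt-out of sale/sharing. The `OptOutStore` class is a hypothetical stand-in for whatever system records opt-outs, and the in-memory set is for illustration only.

```python
from collections.abc import Mapping


def has_gpc_signal(headers: Mapping[str, str]) -> bool:
    """Return True if the request carries a GPC opt-out signal (Sec-GPC: 1).

    HTTP header names are case-insensitive, so compare them in lowercase.
    """
    for name, value in headers.items():
        if name.lower() == "sec-gpc":
            return value.strip() == "1"
    return False


class OptOutStore:
    """Hypothetical in-memory record of users who opted out of sale/sharing."""

    def __init__(self) -> None:
        self._opted_out: set[str] = set()

    def record(self, user_id: str) -> None:
        self._opted_out.add(user_id)

    def is_opted_out(self, user_id: str) -> bool:
        return user_id in self._opted_out


def handle_request(user_id: str, headers: Mapping[str, str], store: OptOutStore) -> bool:
    """Record a GPC opt-out if present; return whether sale/sharing must be suppressed."""
    if has_gpc_signal(headers):
        store.record(user_id)
    return store.is_opted_out(user_id)


if __name__ == "__main__":
    store = OptOutStore()
    print(handle_request("user-123", {"Sec-GPC": "1"}, store))  # True
    print(handle_request("user-123", {}, store))  # True: the earlier opt-out persists
    print(handle_request("user-456", {"User-Agent": "x"}, store))  # False
```

The check is a cheap header lookup that can run on every request, which matters once the signal is on by user choice in every major browser. Persisting the opt-out against a known user, rather than honoring it only per request, is a design choice to confirm with counsel.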

The compliance deadline for the updated COPPA Rule is April 22, 2026. Changes include an expanded definition of personal information, separate parental consent for disclosing children’s data to third parties (including for targeted advertising), new data retention limits, stricter parental consent requirements, and new security controls. If your product touches anyone under 13, this is immediate.

AI is changing advertising itself, not just compliance. AI can now design ads, build campaigns, and understand context far more broadly. The bigger shift: consumers may soon have their own AI agents, and advertisers’ AI agents will need to market to those agents directly. Humans may step out of parts of that loop entirely.

Real Enforcement, Not Compliance Theater

CalPrivacy (the California Privacy Protection Agency) has a new Audit Division, which is responsible for checking that companies comply with the CCPA.

California shared three core enforcement priorities: data minimization, dark patterns, and consumer privacy rights. These show up in enforcement action after enforcement action.

The FTC: Law Enforcement, Not Rule-Writing

FTC Commissioner Mark Meador was direct: the FTC is a law enforcement body, not a business regulator. They’re not trying to run your operations. They’re looking for harm in the marketplace and legal grounds to address it.

Key points from the conversation:

- On children: The agency is asking whether platforms are being operated fairly for kids, not just whether they’re technically compliant. Infinite scroll, addictive defaults, and engagement-maximizing design are on the radar. (Shameless plug: two juries did find Big Tech responsible for its trade practices and conduct. My hubby, podcast co-host, and co-author, Justin, and I wrote about it in Bloomberg Law.)

- On deepfakes: They are primarily being used for scams. The “Take It Down Act,” which requires platforms to remove non-consensual intimate imagery within 48 hours of a valid request, is a top enforcement priority.

- On algorithmic accountability: The Commissioner explicitly rejected the idea that no one is responsible for what an algorithm does. Data deletion and algorithm modification are on the table as enforcement remedies for systems built on improperly collected data.

- On proactive engagement: There’s no formal guidance process for consumer privacy, but reaching out to the FTC in good faith before a problem exists will likely be weighed in your favor if an investigation ever occurs.

- On a national privacy law: 50 state laws is not a functional system, and a single standard would be more efficient, but that’s Congress’s call. People always ask for my view, and it hasn’t changed: a federal law is not likely to happen anytime soon.

India’s New Privacy Law Has Real Deadlines

India’s Digital Personal Data Protection Act (DPDPA) is not theoretical. The law is in effect as of November 13, 2025. Consent Manager registration is required by November 13, 2026, and most remaining obligations apply from May 2027, 18 months after the implementing rules were notified.

What makes India’s approach different from GDPR:

- No sensitive vs. standard data distinction – the distinction is between entity types (standard Data Fiduciary vs. Significant Data Fiduciary, designated by the government based on volume and risk).

- Processors have no independent obligations – all must flow through contracts, making vendor agreements critical.

- Breach notification is fast: CERT-In (India’s Computer Emergency Response Team) within 6 hours of discovery (no minimum threshold), Data Protection Board and affected individuals within 72 hours. Processors must report independently; this cannot be contractually shifted back to the controller.

- Consent notices must be available in all 22 recognized Indian languages – a significant operational challenge given the limits of current translation technology.

- Cross-border transfers are generally permitted, with exceptions for jurisdictions specifically blocked by the government (the list is not yet populated and is not expected to grow quickly).
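Those windows are tight enough that incident runbooks should compute the deadlines the moment a breach is logged. Here is a minimal Python sketch using the timeframes summarized above; it assumes all three clocks start at discovery, and the recipient labels are illustrative.

```python
from datetime import datetime, timedelta, timezone

# Windows as summarized in the session; assumption: every clock starts at discovery.
NOTIFICATION_WINDOWS = {
    "CERT-In": timedelta(hours=6),
    "Data Protection Board": timedelta(hours=72),
    "Affected individuals": timedelta(hours=72),
}


def notification_deadlines(discovered_at: datetime) -> dict[str, datetime]:
    """Return the latest permissible notification time for each recipient."""
    if discovered_at.tzinfo is None:
        # Naive timestamps make deadline math ambiguous across time zones.
        raise ValueError("discovered_at must be timezone-aware")
    return {who: discovered_at + window for who, window in NOTIFICATION_WINDOWS.items()}


if __name__ == "__main__":
    ist = timezone(timedelta(hours=5, minutes=30), "IST")
    discovered = datetime(2026, 3, 1, 22, 0, tzinfo=ist)
    for who, due in notification_deadlines(discovered).items():
        print(f"{who}: {due.isoformat()}")
```

Requiring a timezone-aware timestamp is deliberate: a breach discovered late in the evening in one office can fall on a different calendar day in India, and the 6-hour window leaves no room for that confusion.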

If you process data of individuals in India or offer goods and services there, scope your compliance work now.

Adaptive Privacy: Governing in Real Time

With more uses of AI, it’s important that companies have an AI governance program, and privacy pros are often anointed as the AI governance owners. While hundreds of AI bills have been introduced, many privacy laws already cover AI disclosures and processing considerations (here’s looking at you, CT, my childhood home state, along with CA, CO, UT, TX, and VA).

Static governance programs are no longer adequate for AI environments. Here’s the problem playing out in real time: a company approves an AI tool, completes assessments, and files documentation. Three months later, the vendor quietly updates the model. Same tool, same login, but different behavior, different data handling, and possibly adjusted safety guardrails. The documentation now describes a system that no longer exists. And because the tool still works, nobody noticed.

The realistic AI governance timeline:

- Month 3: Model drift. The vendor updated the model. Terms may have changed. Your documentation hasn’t.

- Month 6: Scope expansion. Teams are using the tool beyond its approved purpose. Data is flowing in ways the ROPA (record of processing activities) doesn’t reflect.

- Month 9: Agentic operation. The tool may now be making decisions and interacting with other systems in ways the original governance framework never contemplated.

The solutions are monitoring-based, not documentation-based: technical tracking of model version numbers, behavioral benchmarking, automated terms monitoring, and LLM firewalls as a control layer that makes your AI policy part of the system rather than just a document.
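To make “monitoring-based” concrete, here is a minimal Python sketch of a drift check that compares what is running today against what governance approved. It assumes you can obtain the current model version (for example, from vendor API metadata), fetch the current terms text, and tag usage by purpose; the `ApprovedBaseline` schema and tool name are hypothetical.

```python
import hashlib
from dataclasses import dataclass


def sha256_text(text: str) -> str:
    """Fingerprint a document (e.g., vendor terms) so any edit is detectable."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ApprovedBaseline:
    """What governance signed off on at approval time (hypothetical schema)."""
    tool: str
    model_version: str
    terms_sha256: str
    approved_purposes: frozenset[str]


def governance_drift(baseline: ApprovedBaseline,
                     current_model_version: str,
                     current_terms_text: str,
                     observed_purposes: set[str]) -> list[str]:
    """Compare current state against the approved baseline; return findings."""
    findings = []
    # Month 3 risk: the vendor quietly updates the model or its terms.
    if current_model_version != baseline.model_version:
        findings.append(
            f"{baseline.tool}: model changed {baseline.model_version} -> {current_model_version}"
        )
    if sha256_text(current_terms_text) != baseline.terms_sha256:
        findings.append(f"{baseline.tool}: vendor terms changed since approval")
    # Month 6 risk: teams use the tool beyond its approved purposes.
    for purpose in sorted(observed_purposes - baseline.approved_purposes):
        findings.append(f"{baseline.tool}: unapproved use '{purpose}'")
    return findings


if __name__ == "__main__":
    baseline = ApprovedBaseline(
        tool="SummarizerTool",
        model_version="v1.2",
        terms_sha256=sha256_text("Vendor terms, January edition"),
        approved_purposes=frozenset({"meeting summaries"}),
    )
    for finding in governance_drift(
        baseline,
        current_model_version="v1.3",
        current_terms_text="Vendor terms, April edition",
        observed_purposes={"meeting summaries", "candidate screening"},
    ):
        print(finding)
```

Each finding is a trigger to revisit the assessment, not an automatic verdict; behavioral benchmarking and LLM firewalls would sit alongside a check like this.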

The bottom line: you have to understand your technology stack well enough to govern it. You don’t need to be an engineer. But you need to know what’s actually happening.

How’s your AI Governance program? If you haven’t yet, now’s a great time to check out my LinkedIn Learning AI Governance course.

Age Assurance: A Global Regulatory Moment

Protecting children online is moving from aspiration to enforcement. Australia, France, and Malaysia have enacted social media restrictions. Brazil’s privacy regulator has become its safety regulator for children. About half the U.S. states have passed some form of age assurance requirement.

This is also an area of contention, with pushback from various trade associations and free speech groups. Expect to see more laws passed to protect children, along with more pushback.

The four primary age assurance models: self-declaration (a checkbox is widely considered insufficient on its own), inference from behavioral signals, estimation from device or account data, and full verification via government ID (strongest, but most privacy-invasive).

New ISO standards (ISO/IEC 27566, Parts 1, 2, and 3) now provide a framework for evaluating these implementations across accuracy, minimization, anti-circumvention, privacy, and accessibility.

One unresolved practical problem: age assurance signals don’t work well on shared or hand-me-down devices, which is exactly the device profile common in households with younger children. App store-level age assurance legislation is advancing, but this problem doesn’t have a clean solution yet.

Children, Personalization, and the Limits of One-Size Design

A developmental psychologist made a point that sounds obvious but has significant implications: children, tweens, teens, and young adults are not the same population. Treating them as a single category in product design or regulation is a flawed starting point.

Personalization can be genuinely valuable, especially in education, where adaptive systems can meet different learning styles. The problem arises when personalization is optimized purely for engagement, not for development.

Two data points that should inform every product decision touching minors:

- 37% of 3-to-5-year-olds have a social media account. Self-declaration of age is not working.

- 90% of parents say they care about their child’s privacy online. 80% reported bypassing their child’s privacy settings themselves. Child-facing privacy features that parents find inconvenient will be circumvented. Parental controls need to encourage conversation, not enable surveillance.

Privacy by design is essential for products that children will use. That means performing privacy risk assessments and engaging product, engineering, legal, compliance, and operations teams throughout the entire lifecycle.

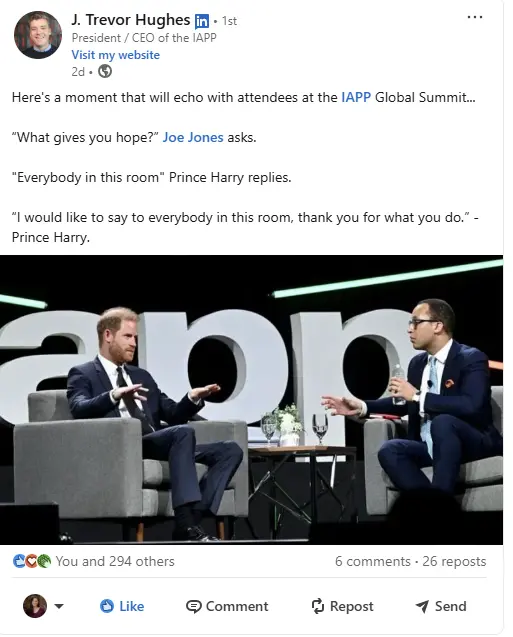

Royalty and Privacy

For some, Prince Harry was the highlight of the IAPP GPS. There are so many thoughtful summaries of his remarks that it’s impossible to share them all here. I encourage you to search LinkedIn for “Prince Harry IAPP” to find colleagues’ takes.

For anyone reading this newsletter who wonders why you keep doing work that can feel like an uphill climb (fighting for privacy resources or a seat at the table, asking tough questions, or pushing back on business leaders when they want to do something questionable), know that your work is important. Prince Harry thinks so too, as captured so well in a post by Trevor Hughes, CEO of IAPP.

I leave you with my response to Prince Harry’s faith in the important work of privacy pros:

For those celebrating Easter – Happy Easter! Those on spring break – safe travels. For everyone else – wishing you a great week ahead.

Stay tuned for more summaries on LinkedIn and in next week’s edition!

Jodi

When you’re ready, here’s how we can help:

Privacy Advisory & Implementation: We help companies navigate privacy requirements with confidence. Our advisory support covers strategy, operations, and real-world implementation.

Fractional Privacy Services: We provide fractional privacy leadership tailored to your needs and pace. From program development to day-to-day support, we help you build and sustain a strong privacy program.

The post Fresh From the Conference Floor: IAPP Global Privacy Summit 2026 appeared first on Red Clover Advisors.