Over the last few years, Finance and Accounting (F&A) functions have moved rapidly from experimenting with AI to embedding it across core operational workflows. In 2024, Gartner reported that 58% of finance teams were using AI, while KPMG’s latest Global AI in Finance study found that AI is now in use at 71% of organizations.

As AI becomes a baseline capability, CFOs are looking to accelerate investments. However, the speed of adoption cannot come at the expense of control, as F&A operations have low tolerance for volatility from risks such as model drift, divergent outputs, and ungoverned autonomous behavior.

The risks are especially significant for mid-market finance teams, which often operate with fragmented oversight across governance, risk, and execution and lack the resources to establish org-wide AI governance. For mid-market CFOs, the signal is clear: a governance-first framework is critical when implementing AI, so that it drives sustainable outcomes without compromising control or audit integrity.

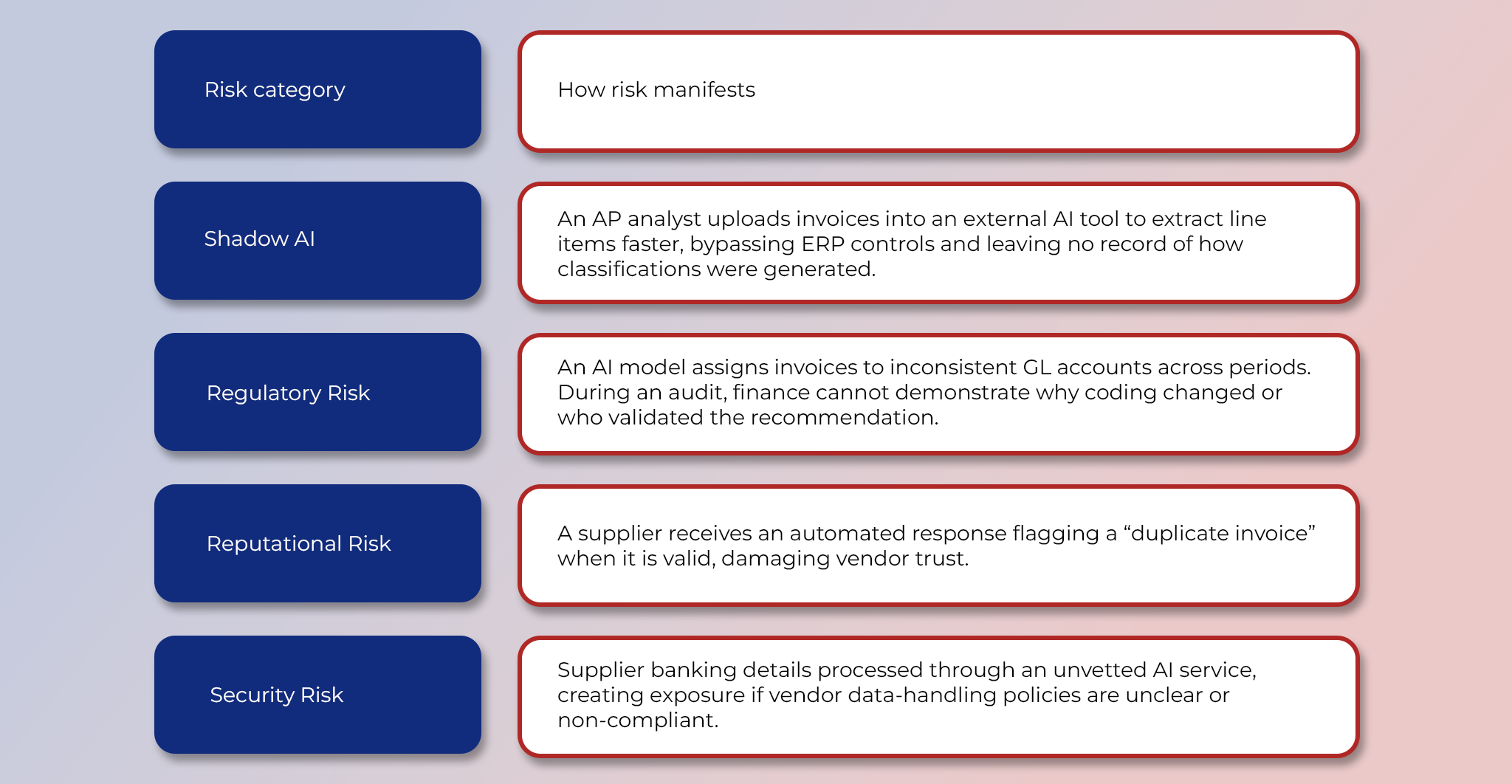

Understanding AI Risks in Mid-Market Finance

- Operational risk: Finance teams often adopt AI tools in an uncoordinated way during high-pressure cycles. Analysts’ use of LLMs may seem benign initially; vendors may embed AI features directly into the ERP; and F&A teams may layer point solutions for workflow automation. Without governance, however, this patchwork can result in inconsistent decision logic and limited visibility into where AI is influencing controllership activities.

- Regulatory risk: When AI supports finance execution by recommending accrual treatments, coding classifications, or reporting adjustments, weak governance creates accountability gaps that surface only during an audit. For instance, auditors may challenge why transactions were treated a certain way. Without clear evidence, finance teams struggle to defend their decisions.

- Reputational risk: Because finance workflows interact with suppliers, customers, and leadership stakeholders, AI failures quickly become visible externally. For example, inconsistencies in LLM-generated explanations of payment timing can damage credibility in the market.

- Security risk: If third-party AI tools ingest sensitive financial data such as supplier banking details, payment schedules, and payroll inputs without strict contractual and technical safeguards, organizations risk leakage, improper data retention, or unauthorized access. Such exposures can be difficult to detect and costly to remediate.

How Risks Unfold Where Ungoverned Tooling Accelerates AP Workflows

Ungoverned tooling also leads to wasted spend. Model drift or higher exception volumes may affect the ROI from AI implementations. As more transactions fall out of the AI-enabled path, finance teams absorb additional manual rework, like reclassifying invoices or resolving mismatches.

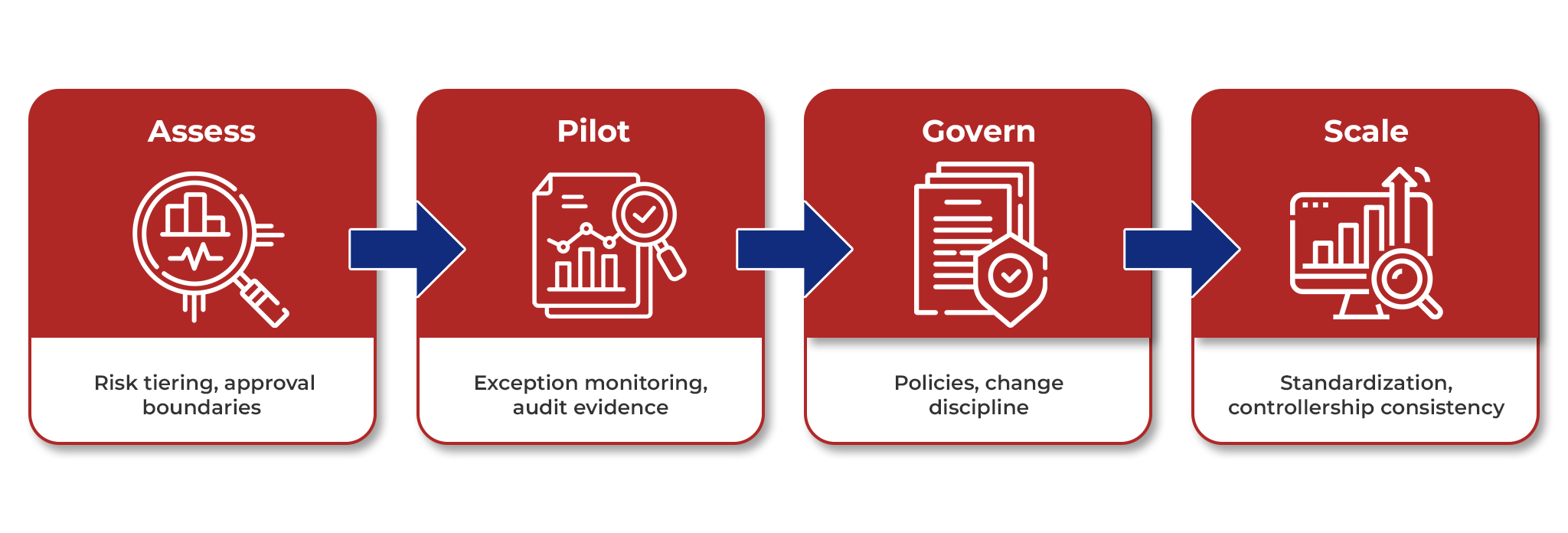

A 4-Step Governance-First Framework For Implementing AI in F&A

Assess

During the assessment phase, most CFOs focus on understanding viability and proving the ROI of an AI use case. An equally important question is whether the application can be adopted without weakening the control environment. For instance, if an invoice auto-routing and coding solution starts posting entries without enforcing segregation of duties or maintaining a defensible audit trail, finance may discover issues only when misclassifications surface during reconciliation or audits.

Therefore, CFOs should begin by prioritizing use cases based on financial materiality and execution proximity. It is important to distinguish between assistive applications (e.g., extraction or analytics support) and those that influence postings, accruals, or payment decisions.

This is also the right time to define initial risk tolerances along three key dimensions:

- Model drift: Decide how much performance degradation is acceptable over time as vendor behavior, invoice formats, or transaction patterns change.

- Explainability: Determine what level of rationale is required for AI-assisted decisions, particularly for coding, accrual recommendations, or reporting support, so outputs remain defensible under audit and controllership review.

- Autonomy: Set boundaries on what AI can execute versus recommend, specifying where human approvals remain mandatory.
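One way to make these three tolerances concrete is to record them per use case in a small, reviewable structure. The sketch below is illustrative only: the `RiskTolerance` class, the `Autonomy` levels, and the accuracy figures are assumptions made for this example, not values from any tool or standard.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    """Hypothetical autonomy levels; adapt the names to your own policy."""
    RECOMMEND_ONLY = "recommend_only"                # AI proposes, a human posts
    EXECUTE_WITH_APPROVAL = "execute_with_approval"  # AI acts after sign-off
    EXECUTE = "execute"                              # AI acts on its own


@dataclass(frozen=True)
class RiskTolerance:
    use_case: str
    baseline_accuracy: float   # accuracy measured when the use case was approved
    max_accuracy_drop: float   # drift tolerance, as an absolute drop in accuracy
    rationale_required: bool   # explainability: must every output carry a rationale?
    autonomy: Autonomy         # what the AI may do without a human

    def drift_breached(self, current_accuracy: float) -> bool:
        """Return True when accuracy has fallen by more than the tolerated drop."""
        return (self.baseline_accuracy - current_accuracy) > self.max_accuracy_drop


# Example values are assumptions chosen only to illustrate the idea.
invoice_coding = RiskTolerance(
    use_case="invoice_coding",
    baseline_accuracy=0.96,
    max_accuracy_drop=0.03,
    rationale_required=True,
    autonomy=Autonomy.RECOMMEND_ONLY,
)

print(invoice_coding.drift_breached(0.95))  # small dip, within tolerance
print(invoice_coding.drift_breached(0.90))  # larger dip, breaches tolerance
```

Keeping tolerances in one place like this gives controllership a single artifact to review and sign off, whatever form it ultimately takes.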

In this phase, external expertise can help mid-market finance teams accelerate outcomes without overbuilding internal capability. Cogneesol’s AI and machine learning solutions for intelligent document processing, workflow automation, and predictive analytics can help finance teams identify and operationalize high-impact use cases while ensuring they are designed with controllership requirements and scalability in mind.

Pilot

When testing pilots, evidence of accuracy and efficiency is vital. It is equally important to look for signs of execution risk that typically emerge only at scale, such as transaction variability or inconsistent recommendations across categories. Success criteria should therefore include both performance metrics and stability indicators such as override rates, exception volumes, and the effort required to keep outputs aligned with policies.
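As a rough illustration, override and exception rates can be computed directly from pilot records. The `PilotOutcome` structure, the field names, and the GL codes below are hypothetical; the sketch assumes that an exception means the AI produced no suggestion and that an override means the reviewer posted something different from the suggestion.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class PilotOutcome:
    ai_suggestion: Optional[str]  # None means the AI routed the item to exceptions
    final_value: str              # what the human reviewer actually posted


def stability_indicators(outcomes: List[PilotOutcome]) -> Dict[str, float]:
    """Compute exception rate (over all items) and override rate (over suggestions)."""
    total = len(outcomes)
    if total == 0:
        return {"exception_rate": 0.0, "override_rate": 0.0}
    exceptions = sum(1 for o in outcomes if o.ai_suggestion is None)
    suggested = total - exceptions
    overrides = sum(
        1
        for o in outcomes
        if o.ai_suggestion is not None and o.ai_suggestion != o.final_value
    )
    return {
        "exception_rate": exceptions / total,
        "override_rate": overrides / suggested if suggested else 0.0,
    }


# Made-up sample: four invoices coded during a pilot.
sample = [
    PilotOutcome("6100", "6100"),  # accepted as suggested
    PilotOutcome("6100", "6200"),  # reviewer overrode the suggestion
    PilotOutcome(None, "6300"),    # AI raised an exception
    PilotOutcome("6400", "6400"),  # accepted as suggested
]
metrics = stability_indicators(sample)
print(metrics)
```

Tracking these two rates per period, rather than once at the end of the pilot, makes it easier to see whether outcomes are stabilizing or degrading.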

Also, pilots should remain recommendation-first. AI may propose coding, routing, or matching actions, but postings, approvals, and payment triggers must stay under human control until outcomes are proven consistent. Importantly, audit evidence must be captured from day one through decision logs, override records, and clear linkage to ERP transactions, ensuring the pilot also produces defensible learnings beyond early wins.
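A decision log of the kind described above can be as simple as one structured record per AI-assisted decision. The following is a minimal sketch, assuming hypothetical field names and a made-up transaction ID; it enforces one rule, that an override must carry a documented reason, and serializes each entry as a JSON line.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionLogEntry:
    erp_transaction_id: str         # linkage back to the ERP posting
    use_case: str
    ai_recommendation: str
    ai_rationale: str
    final_decision: str
    reviewer: str
    override_reason: Optional[str]  # required when the reviewer disagrees
    logged_at: str                  # UTC timestamp in ISO 8601 format


def record_decision(
    erp_transaction_id: str,
    use_case: str,
    ai_recommendation: str,
    ai_rationale: str,
    final_decision: str,
    reviewer: str,
    override_reason: Optional[str] = None,
) -> DecisionLogEntry:
    """Build a log entry; reject overrides that lack a documented reason."""
    overridden = ai_recommendation != final_decision
    if overridden and not override_reason:
        raise ValueError("An override must carry a documented reason.")
    return DecisionLogEntry(
        erp_transaction_id=erp_transaction_id,
        use_case=use_case,
        ai_recommendation=ai_recommendation,
        ai_rationale=ai_rationale,
        final_decision=final_decision,
        reviewer=reviewer,
        override_reason=override_reason,
        logged_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json_line(entry: DecisionLogEntry) -> str:
    """Serialize an entry as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(entry), sort_keys=True)


# Illustrative values only; the ID format and GL codes are invented.
entry = record_decision(
    erp_transaction_id="AP-2024-000123",
    use_case="invoice_coding",
    ai_recommendation="6100",
    ai_rationale="Vendor history maps to office supplies",
    final_decision="6200",
    reviewer="ap.lead",
    override_reason="Invoice covers equipment rental, not supplies",
)
print(to_json_line(entry))
```

Where the log is stored, and for how long, would depend on the organization's retention and audit requirements.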

CFOs need a unified view of where AI is at work across AP and close workflows. Such a view helps standardize exception handling, maintain audit traceability, and prevent fragmented tool adoption as AI scales across business units.

Govern

Build governance as a practical operating layer rather than an adversarial function that slows adoption and vetoes decisions. Mid-market finance teams do not need a standalone AI governance team, but they do need finance-specific guardrails in place early. This includes defining:

- What AI is allowed to do (recommendation-only vs execution),

- Where human approvals remain mandatory, and

- What documentation must exist whenever AI influences coding, postings, or payments.

Accountability should be embedded into existing roles. For instance, AP and FP&A process leads can manage day-to-day exceptions, and CFOs should integrate AI checks into routines that already exist, such as close governance, vendor onboarding, and internal control reviews.

Baseline change discipline for AI tools

F&A teams should also know what changes as AI tools evolve. Before updates are released into live systems, they should be run in a test environment against a small set of representative transactions to validate expected behavior.
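The check described here resembles a regression test: replay a fixed set of transactions with known, approved outcomes through the updated tool and compare results. The sketch below assumes a hypothetical `predict` callable standing in for the updated tool and an illustrative 95% agreement threshold; neither comes from a specific product.

```python
from typing import Callable, Dict, List, Tuple


def regression_check(
    predict: Callable[[str], str],
    golden_set: Dict[str, str],
    min_agreement: float = 0.95,  # assumed threshold; set per risk tolerance
) -> Tuple[bool, List[str]]:
    """Replay representative transactions and report which ones changed outcome."""
    if not golden_set:
        raise ValueError("The golden set must contain at least one transaction.")
    mismatches = [
        txn_id for txn_id, expected in golden_set.items()
        if predict(txn_id) != expected
    ]
    agreement = 1 - len(mismatches) / len(golden_set)
    return agreement >= min_agreement, mismatches


# Approved outcomes for a handful of representative invoices (made-up data).
golden = {
    "INV-001": "6100",
    "INV-002": "6100",
    "INV-003": "6200",
    "INV-004": "6400",
}

# Stand-in for the updated tool: it now codes INV-003 differently.
updated_outputs = {
    "INV-001": "6100",
    "INV-002": "6100",
    "INV-003": "6300",
    "INV-004": "6400",
}
passed, changed = regression_check(updated_outputs.__getitem__, golden)
print(passed, changed)
```

A failed check does not necessarily mean the update is wrong, only that the changed transactions should be reviewed before the update goes live.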

Document for transparency

Governance should also be supported through standardized documentation: at a minimum, the scope of automation should be captured, along with the systems and data sources involved, where human approvals are necessary, and how exceptions are handled.

Scale

When pilots are being extended, mid-market finance teams should prioritize consistency rather than velocity. This is the stage when deployment patterns are standardized using the same exception logic, approval thresholds, documentation, and monitoring metrics each time AI is extended to a new workflow or business unit.

Expansion should remain incremental and risk-tiered. Low-impact assistive use cases can scale broadly, while solutions that affect coding, accruals, or payment readiness should be scaled only after outcomes have been stable across periods. As adoption expands, CFOs will need basic visibility into where AI is running and what tools are being used. Maintaining an inventory of use cases can help prevent tool sprawl and maintain predictable ROI on AI investments.
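An inventory like this can start as a simple list with a risk tier and a stability counter per use case. The sketch below is one possible shape, with hypothetical tool names, tier labels, and a three-period stability requirement chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class UseCase:
    name: str
    tool: str
    business_unit: str
    tier: str            # "assistive" or "execution" (assumed labels)
    stable_periods: int  # consecutive periods within agreed tolerances


def ready_to_scale(use_case: UseCase, required_stable_periods: int = 3) -> bool:
    """Assistive use cases scale freely; execution-tier ones need proven stability."""
    if use_case.tier == "assistive":
        return True
    return use_case.stable_periods >= required_stable_periods


def tools_in_use(inventory: List[UseCase]) -> Dict[str, List[str]]:
    """Group use cases by tool to make overlapping tools visible."""
    grouped: Dict[str, List[str]] = {}
    for uc in inventory:
        grouped.setdefault(uc.tool, []).append(uc.name)
    return grouped


# Made-up inventory entries.
inventory = [
    UseCase("invoice_data_extraction", "ToolA", "AP", "assistive", 1),
    UseCase("invoice_coding", "ToolA", "AP", "execution", 2),
    UseCase("accrual_recommendations", "ToolB", "Close", "execution", 4),
]

for uc in inventory:
    print(uc.name, ready_to_scale(uc))
print(tools_in_use(inventory))
```

Even a spreadsheet with these same columns would serve the purpose; the point is that tier and stability are recorded before a use case is extended.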

Continuously Optimize Your Finance AI Portfolio

Finance AI adoption does not end at deployment. The performance of tools must be sustained as transaction patterns, supplier behavior, and underlying ERP data evolve over time. A recent annual survey of CEOs by PwC found that while 33% of AI adopters saw benefits in either cost or revenue, 56% of companies reported no measurable financial gain.

Without a strong operational foundation, organizations may lose momentum after some early gains. Reviewing AI-enabled workflows at a regular cadence is therefore critical to catch model drift early, monitor exception volumes, and ensure automation remains stable through close, audit, and compliance cycles. Risk assessments should also be repeated periodically, since regulatory expectations, security threats, and vendor AI capabilities change continuously, requiring new mitigation measures even for mature use cases.

Frameworks such as Cogneesol’s ADIS can support governed AI adoption by providing an operational intelligence layer that enables adaptive, scalable process automation beyond one-time deployments. Ultimately, governance-first finance AI is not a static control exercise, but a continuous discipline – see how ADIS can support durable AI outcomes without compromising financial integrity.

The post A Governance-First Framework for Implementing AI in Mid-Market Finance appeared first on Cogneesol Blog.